Customer churn prediction is the use of machine learning to assign each account a probability of cancellation inside a defined future window. Production-grade models in 2026 hit 70 to 85% accuracy. The hard part isn't the model. The hard part is what happens in the 24 hours after a flag fires, and that's where most churn prediction programs quietly die.

I've spent two decades watching companies invest seven figures in predictive infrastructure and recover almost none of it. The failure pattern is consistent: the data team ships a model with respectable AUC, leadership claps, the dashboard goes live, and six months in the predicted churn happens anyway. Nobody knew which CSMs owned which flagged accounts, what offer they were authorized to make, or how to measure whether the save attempt worked. The prediction was right. The operating system around it was missing.

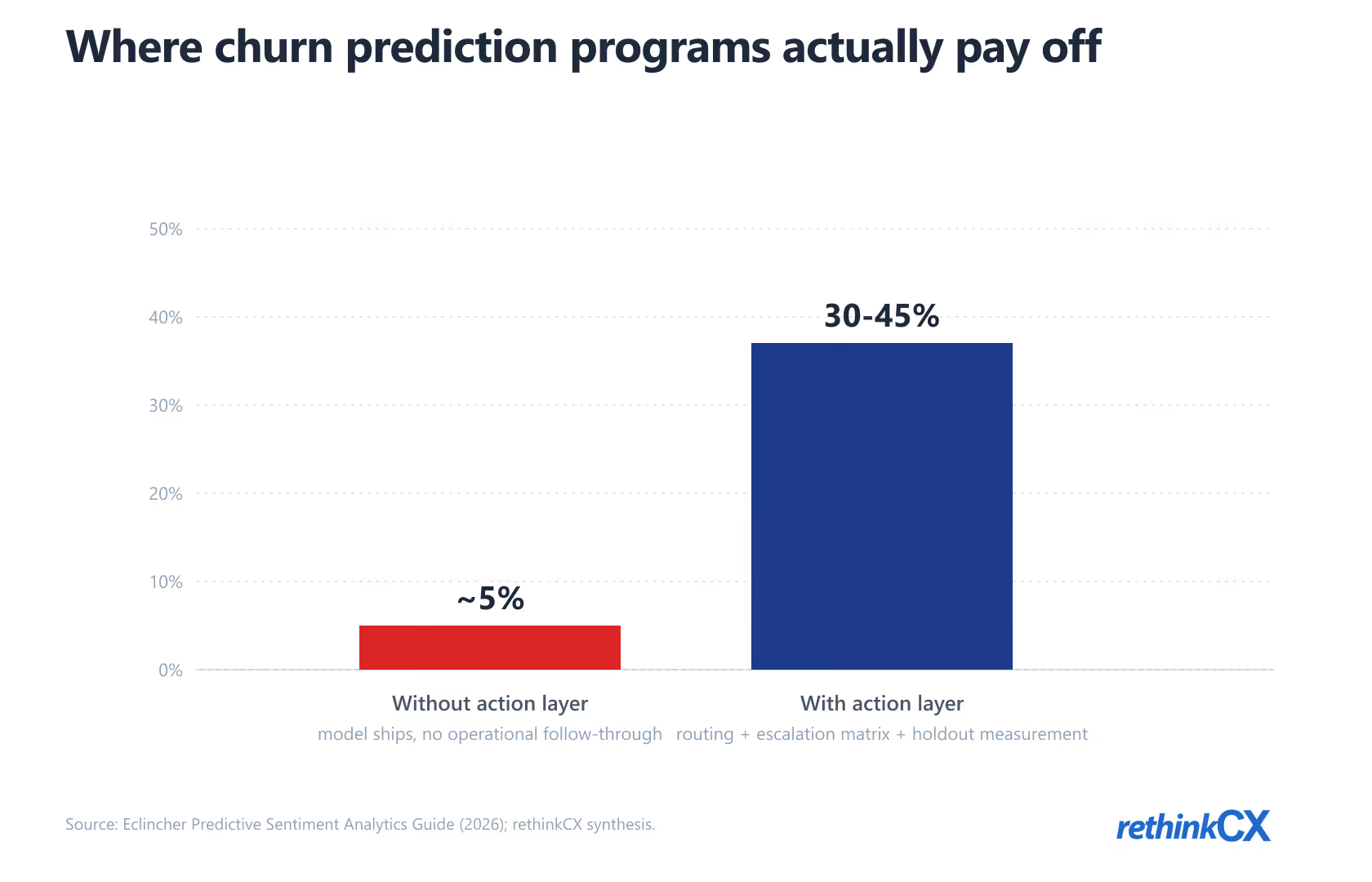

This guide covers what actually matters in 2026: the four churn prediction models worth your time, the data that genuinely moves the needle, the six-step build process, and the post-prediction action layer that separates the teams who recover 30 to 45% of at-risk revenue from the teams who recover almost none of it. The strategic stakes for customer churn prediction and prevention are real. Forrester's 2025 Global CX Index results found that 21% of brands' CX scores declined year over year, with predictive customer intelligence emerging as the operational separator between brands that recover at-risk revenue and brands that watch it walk out the door.

What customer churn prediction actually is, and what it isn't

The shortest honest definition: churn prediction is a per-account probability score, generated on a regular cadence (daily or weekly), with a clear label definition for what counts as "churned" and a clear time horizon for the prediction window. Everything else is either feature engineering, model selection, or operational machinery built around that core score.

What it isn't: a substitute for retention strategy, a replacement for talking to customers, or magic. The score answers "which accounts are at risk right now?" It does not answer "what should we do about it?" or "is the underlying product problem worth fixing?" Those are strategic questions that prediction can inform but not resolve.

Two distinctions matter from the start. First, prediction is forward-looking; analysis is backward-looking. Prediction tells you who's about to leave. Analysis tells you why, in aggregate, people have been leaving. Both are useful. They're different work, requiring different data, and most teams confuse them.

Second, prediction without action infrastructure is a vanity exercise. The score has economic value only when it triggers a specific intervention (an outreach call, an offer, a feature unlock, an executive sponsor escalation) with measurable ownership of who acts and when. A dashboard full of risk scores nobody acts on is just an expensive way to feel informed. We covered the upstream measurement framing in our customer churn complete guide; this post picks up where that one ends.

The four model families that matter in 2026

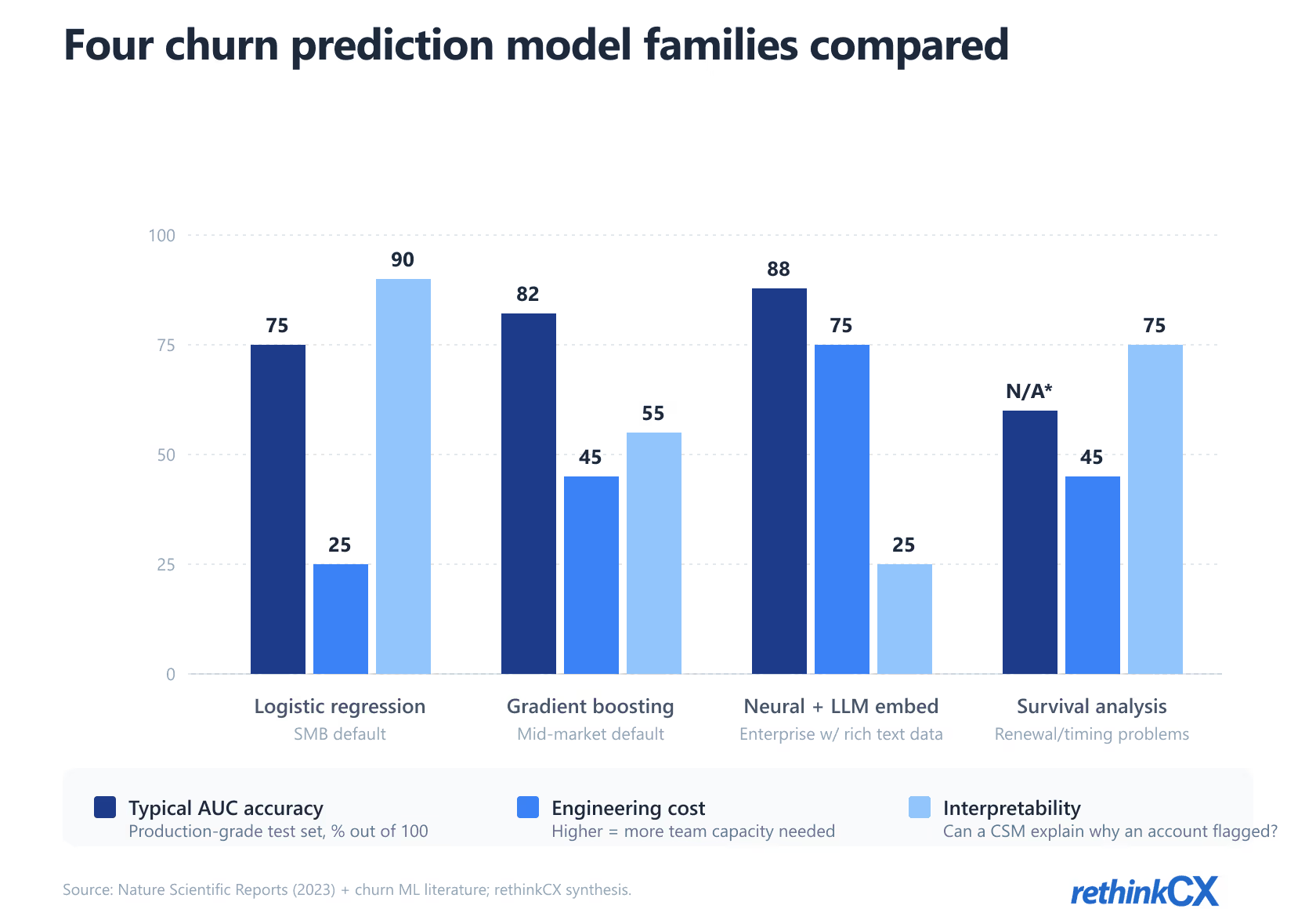

Most "best churn prediction model" content overweights the question. For 90% of businesses, the model family choice matters less than the data quality and the action layer. That said, here's the practitioner version of the tradeoffs.

Logistic regression: the boring one that still wins for most SMBs

Logistic regression assigns a churn probability based on a weighted combination of input features. It's interpretable (each feature's coefficient tells you how much it pushes the score up or down), fast to train, and easy to maintain. Modern variants like elastic-net regularization handle moderate feature counts without overfitting.
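To make the "weighted combination" concrete, here is a minimal scoring sketch. The feature names, coefficients, and intercept are hypothetical values chosen for illustration; in practice they come from fitting a regularized logistic regression on your own labeled history.

```python
import math

# Hypothetical coefficients for illustration only. Real values come from
# fitting the model on your own labeled accounts.
INTERCEPT = -2.0
COEFFICIENTS = {
    "failed_payments_90d": 0.9,   # each failed payment pushes risk up
    "support_tickets_30d": 0.25,  # each recent ticket pushes risk up
    "logins_per_week": -0.3,      # each weekly login pushes risk down
}


def churn_probability(features: dict) -> float:
    """Sigmoid of the intercept plus the weighted sum of feature values."""
    z = INTERCEPT + sum(
        COEFFICIENTS[name] * features.get(name, 0.0) for name in COEFFICIENTS
    )
    return 1.0 / (1.0 + math.exp(-z))


def contributions(features: dict) -> list:
    """Per-feature push on the log-odds, largest magnitude first."""
    items = [
        (name, COEFFICIENTS[name] * features.get(name, 0.0))
        for name in COEFFICIENTS
    ]
    return sorted(items, key=lambda kv: abs(kv[1]), reverse=True)


account = {"failed_payments_90d": 2, "support_tickets_30d": 4, "logins_per_week": 1}
score = churn_probability(account)
top_driver = contributions(account)[0]
```

The `contributions` list is the interpretability payoff: the top entry is the one-line explanation a CSM can read without a data scientist in the room.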

The case for it in 2026: if your business has fewer than 10,000 active accounts, a clean event taxonomy, and a small data team, logistic regression with 15 to 30 well-engineered features will rank-order risk approximately as well as anything more sophisticated. The lift from gradient boosting at this scale is usually 3 to 6 percentage points of AUC. Real, but rarely worth the engineering cost for a team without a dedicated MLOps function.

Gradient boosting (XGBoost, LightGBM): the 2024-2026 default

Gradient boosting builds a sequence of decision trees, each correcting the errors of the previous one. XGBoost and LightGBM are the two production-grade implementations. They handle non-linear feature interactions, mixed numeric and categorical data, and missing values without much preprocessing. For mid-market subscription businesses with 10,000 to 500,000 accounts and a working data team, this is the default in 2026.

Accuracy gains over logistic regression typically run 5 to 10 percentage points of AUC on real-world churn datasets. The cost: model interpretability drops. SHAP values help, but you can no longer hand a CSM a one-line explanation of why an account is flagged. That tradeoff matters more than data scientists usually admit, and the action-layer section below explains why.

Neural networks layered with LLM embeddings: when conversational data matters

The biggest accuracy gains of 2024 to 2026 came from incorporating unstructured conversational signal. CSM call transcripts, support ticket text, and chat logs carry early-warning signal that structured event data misses by weeks. The honest mechanic: you embed the conversational text using a modern LLM, then feed those embeddings into a neural model alongside your structured features.

A customer whose CSM hears "we're evaluating options" on a call is, according to practitioner reports summarized in 2025-2026 industry analyst coverage, four to six times more likely to churn within 90 days than the population baseline. That signal exists nowhere in your structured event data, only in the call. Until LLM embeddings made the conversational data tractable, that signal was wasted. The technical underpinnings overlap with our broader treatment of machine learning for customer insights, which covers the embedding architecture in more depth.

When this approach wins: enterprise SaaS with rich CSM coverage, support orgs with high-quality ticket text, conversational AI deployments where chat logs are clean. When it doesn't: SMB businesses with thin conversational coverage, where the embedding signal is sparse and noisy.

Survival analysis: when timing matters more than a binary outcome

Survival models (Cox proportional hazards, accelerated failure time) predict the timing of churn rather than the binary yes-or-no. The output is a survival curve per account: probability of still being a customer at 30, 60, 90, 180 days.

For most operational use cases, the binary "will they churn in the next 90 days?" question is sufficient and survival analysis is overkill. But for two specific cases it's the right tool: contract-renewal forecasting where the renewal date varies per account, and lifetime value modeling where the integral under the survival curve drives the LTV calculation. If those use cases describe your business, the analytics team should be running survival models alongside the primary prediction pipeline.
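The LTV case is easier to see with numbers. The sketch below assumes a survival curve has already been produced by some survival model and approximates the area under it with the trapezoid rule. The curve values and the daily revenue figure are made up for illustration, and the result is truncated at the last horizon (180 days), so it is a lower bound on lifetime value rather than the full figure.

```python
def expected_retained_days(curve: list) -> float:
    """Trapezoidal area under a survival curve.

    curve is a list of (day, probability_still_a_customer) points,
    sorted by day and starting at day 0.
    """
    area = 0.0
    for (d0, s0), (d1, s1) in zip(curve, curve[1:]):
        area += (d1 - d0) * (s0 + s1) / 2.0
    return area


# Hypothetical per-account survival curve at 0, 30, 60, 90, and 180 days.
curve = [(0, 1.0), (30, 0.95), (60, 0.90), (90, 0.80), (180, 0.60)]

DAILY_REVENUE = 10.0  # hypothetical revenue per retained day

retained_days = expected_retained_days(curve)
ltv_180d = retained_days * DAILY_REVENUE
```

With these illustrative inputs the account is expected to be retained for roughly 145.5 of the next 180 days, which is the quantity a binary 90-day score cannot give you.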

The right way to choose between these four is by data shape and operational fit, not by accuracy ceiling. Most teams over-index on accuracy and under-index on the questions of interpretability, retraining cost, and how the score will be consumed by the people who act on it.

The data you actually need (and the data everyone wishes they had)

The feature set determines accuracy more than the model family. Here's the practitioner version of what to instrument, ordered by leverage.

The non-negotiable feature set

Every churn prediction model needs four data classes: usage cadence (logins, feature engagement, time-in-product, session frequency), billing events (failed payments, downgrades, plan changes, contract renewal proximity), support history (ticket volume, severity, resolution time, recent escalations), and explicit feedback signal (NPS, CSAT, post-interaction surveys, in-product satisfaction prompts).

Without these four, you don't have a churn prediction model. You have a guess wearing technical clothing. Most teams underestimate the third category in particular. A spike in support ticket volume in the 30 days before a renewal is one of the strongest single predictors in any subscription business; underweighting it costs accuracy. The structural KPIs that feed these data classes are covered in our 22 customer service KPIs guide, which maps the metrics to the operational signals churn models actually need.

The high-leverage features most teams skip

Three features routinely separate good models from production-grade ones. CSM call sentiment (derived from transcript analysis using modern speech-to-text plus LLM scoring) typically lifts AUC by two to four points when the CSM coverage is real. Support reply latency (how long the customer waits for a meaningful response, not just acknowledgment) correlates with churn more strongly than ticket count alone. And login-streak breaks (the first time an active user goes 14+ days without logging in) are a leading indicator weeks ahead of cancellation.
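Of the three, the login-streak break is the simplest to compute once login events are available. A minimal sketch, assuming login dates arrive as a plain list and that a "break" means 14 or more calendar days elapsed since the previous login (one reasonable reading of the definition above). The function name and the `as_of` parameter are illustrative, and logins after `as_of` are dropped so the feature never sees the future.

```python
from datetime import date, timedelta
from typing import Optional


def first_streak_break(login_dates: list, as_of: date, gap_days: int = 14) -> Optional[date]:
    """Return the date the first gap of gap_days or more was crossed, else None."""
    # Ignore anything after the scoring date to avoid leaking future data.
    days = sorted({d for d in login_dates if d <= as_of})
    if not days:
        return None
    # Compare each login with the next one; the scoring date closes the last gap.
    for prev, nxt in zip(days, days[1:] + [as_of]):
        if (nxt - prev).days >= gap_days:
            return prev + timedelta(days=gap_days)
    return None


logins = [date(2026, 1, 1), date(2026, 1, 2), date(2026, 1, 3), date(2026, 1, 20)]
break_date = first_streak_break(logins, as_of=date(2026, 2, 1))
```

Here the user went quiet after January 3 and came back on January 20, so the break is dated January 17, the day the 14-day threshold was crossed.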

The pattern: the high-leverage features are operationally inconvenient to capture but cheap to use once you have them. Most teams skip them because the data engineering work is across team boundaries (call recording lives with sales tools; ticket reply latency lives with support tools), not because the features don't work.

The features that promise more than they deliver

Web session recordings and scroll-depth heatmaps consistently underperform expectations as churn-prediction features. They're useful for product analytics and conversion optimization; they add little to a churn model. Skip them in v1. If you're tempted to add them in v2 because someone on the team thinks they should help, run an ablation study. Feature importance will tell the truth.

We've covered the broader question of what signals to capture in the voice of customer programs guide; the prediction-specific subset is what matters here.

How to build a churn prediction model: the honest 6-step version

The published "build a churn prediction model in N steps" guides are mostly the same workflow with different numbers. Here's the version that maps to what actually breaks in production.

1. Define your churn event precisely. This is the most-skipped step and it breaks everything downstream. "Churned" needs a calendar definition that any analyst can apply consistently. For example: "no active subscription on the first day of the calendar month following the cancellation date." Vague definitions ("they stopped using it") produce models that look great in training and fail in production because the label was inconsistent.

2. Build the labeled training set with proper temporal cutoffs. Random train-test splits are the most common silent error in churn modeling. Customer behavior has time structure; if a row from June lands in the training set and a row from January lands in the test set, the model learns from the future to predict the past. Use temporal splits (train on Jan-Sep, test on Oct-Dec) or you will ship a model that looks 92% accurate in validation and 68% accurate in production.

3. Engineer the feature set with the "what would a CSM actually look at" filter. Every feature in the model should be one a thoughtful CSM, given the account in front of them, would consider. This isn't a constraint; it's a quality check. Features that pass the filter are interpretable, defensible, and survive retraining cycles. Features that don't (obscure ratios, multi-step derived signals nobody can explain) tend to be noise that the model overfits to.

4. Pick the model family using the decision logic in the model-families section above. Don't pick by accuracy ceiling. Pick by data shape, team capacity, and how the score will be consumed. A logistic regression with 78% AUC that the CSM team trusts will save more revenue than a neural network with 87% AUC that nobody understands.

5. Validate against held-out cohorts using temporal splits and production-realistic metrics. Accuracy and AUC are starter metrics. The metrics that matter operationally are precision-at-top-K (of the top 100 flagged accounts, how many actually churn?) and lift-over-baseline (how much better than random selection is the model at finding churners?). These are the two numbers the action layer cares about.

6. Calibrate the threshold for action, not just for prediction. This is where most teams ship a model nobody uses. The model output is a probability. The action depends on a threshold (above 0.7, escalate; below 0.3, ignore; in between, automated email). The threshold needs joint calibration with the action capacity. If your CSM team has bandwidth for 50 calls a week and the model flags 200 accounts a week, the threshold is wrong. Calibrate the model output to the operational throughput of the team that has to act.
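Steps 5 and 6 reduce to a few lines of arithmetic. The sketch below is one way to compute precision-at-top-K, lift-over-baseline, and a capacity-driven threshold. The function names and the toy data are illustrative, and the threshold rule (take the score of the Nth-ranked account, where N is weekly capacity) is an assumption about how a team might calibrate, not a standard.

```python
def precision_at_k(scored: list, k: int) -> float:
    """Share of the k highest-scored accounts that actually churned.

    scored is a non-empty list of (probability, churned) pairs; k must be >= 1.
    """
    top = sorted(scored, key=lambda row: row[0], reverse=True)[:k]
    return sum(1 for _, churned in top if churned) / len(top)


def lift_over_baseline(scored: list, k: int) -> float:
    """Precision in the top k divided by the overall churn rate."""
    base_rate = sum(1 for _, churned in scored if churned) / len(scored)
    return precision_at_k(scored, k) / base_rate


def capacity_threshold(probabilities: list, weekly_capacity: int) -> float:
    """Score of the last account the team has bandwidth to act on.

    Flagging accounts with score >= this value keeps volume at capacity,
    except when tied scores push the count slightly over.
    """
    if weekly_capacity < 1:
        raise ValueError("weekly_capacity must be at least 1")
    ranked = sorted(probabilities, reverse=True)
    if weekly_capacity >= len(ranked):
        return 0.0  # the team can act on every account
    return ranked[weekly_capacity - 1]


# Toy held-out cohort: (predicted probability, actually churned).
scored = [
    (0.9, True), (0.8, True), (0.7, False), (0.6, True),
    (0.4, False), (0.3, False), (0.2, False), (0.1, False),
]
p_at_4 = precision_at_k(scored, 4)
lift_at_4 = lift_over_baseline(scored, 4)
threshold = capacity_threshold([p for p, _ in scored], weekly_capacity=3)
```

In this toy cohort the top four flags are right three times out of four, twice as good as picking accounts at random, and a team with capacity for three interventions a week would set its action threshold at 0.7.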

This 6-step process is the difference between a churn model that exists on a dashboard and one that drives revenue retention. Most teams skip steps 1, 2, and 6, ship the model anyway, and wonder six months later why it didn't move the metric.
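Steps 1 and 2 are the cheapest to get right in code. The sketch below encodes the example label definition from step 1 and a date-based split for step 2. The row layout (`snapshot_date` key, inclusive subscription periods) is an assumption made for illustration.

```python
from datetime import date


def first_of_next_month(d: date) -> date:
    """First day of the calendar month after d."""
    if d.month == 12:
        return date(d.year + 1, 1, 1)
    return date(d.year, d.month + 1, 1)


def is_churned(cancellation_date, active_periods: list) -> bool:
    """Label per step 1: no active subscription on the first day of the
    calendar month following the cancellation date.

    active_periods is a list of (start, end) dates, both inclusive.
    """
    if cancellation_date is None:
        return False
    check_day = first_of_next_month(cancellation_date)
    return not any(start <= check_day <= end for start, end in active_periods)


def temporal_split(rows: list, cutoff: date):
    """Train on snapshots strictly before cutoff, test on the rest."""
    train = [r for r in rows if r["snapshot_date"] < cutoff]
    test = [r for r in rows if r["snapshot_date"] >= cutoff]
    return train, test


# An account that cancelled in March and did not come back.
label = is_churned(date(2025, 3, 10), [(date(2024, 3, 15), date(2025, 3, 31))])

# Train on Jan-Sep, test on Oct-Dec.
rows = [{"snapshot_date": date(2025, m, 1)} for m in range(1, 13)]
train, test = temporal_split(rows, cutoff=date(2025, 10, 1))
```

One caveat worth checking in a real pipeline: the label window for the last training snapshots should also close before the cutoff, otherwise outcome data from the test period leaks into training through the labels.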

The post-prediction action layer: where most efforts die

Here's the contrarian observation that drives this entire post: the model accuracy gap between a mediocre churn predictor (70% AUC) and an excellent one (87% AUC) matters less than the gap between any model with an action playbook and any model without one.

I've watched the same pattern repeat at multiple mid-market subscription orgs. The data team ships a churn model with respectable accuracy, leadership signs off, the dashboard goes live, and predicting customer churn becomes a quarterly leadership ritual while the predicted churn keeps happening anyway. Save rates on flagged accounts in these failed deployments run near zero. Save rates in deployments where the action layer is engineered run 30 to 45%, and sometimes higher in B2B accounts with executive sponsorship available. The Gainsight State of Customer Churn in 2024 report found that poor service ranks among the top customer-cited reasons for leaving, but the underlying lever is rarely "service quality in general." It's the quality of the response in the 30-day pre-churn window that the model is supposed to surface.

The action layer breaks down into three operational questions, all of which need answers before the model goes live.

The 24-hour ownership question

Who owns the next action when an account flags above the action threshold? Not "Customer Success owns it." That's not specific enough. Which CSM, by what trigger, with what SLA? In failed deployments, the answer is some version of "we'll figure it out." In working deployments, the answer is a routing rule that maps account segments to specific CSMs with a 24-hour response SLA from the moment the flag fires.
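A routing rule of this kind can be very small. The sketch below is illustrative only: the segment names, CSM identifiers, and fallback owner are hypothetical, and a real deployment would read them from the CRM rather than a hard-coded table.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical segment-to-owner table; in practice this lives in the CRM.
ROUTING = {
    "enterprise": "csm_alice",
    "mid_market": "csm_bob",
}
DEFAULT_OWNER = "cs_queue_lead"  # fallback so no flag is ever unowned
RESPONSE_SLA = timedelta(hours=24)


@dataclass
class Assignment:
    account_id: str
    owner: str
    due_by: datetime


def route_flag(account_id: str, segment: str, flagged_at: datetime) -> Assignment:
    """Assign a named owner and a 24-hour deadline the moment a flag fires."""
    owner = ROUTING.get(segment, DEFAULT_OWNER)
    return Assignment(account_id, owner, flagged_at + RESPONSE_SLA)


def is_overdue(assignment: Assignment, now: datetime) -> bool:
    """True once the SLA deadline has passed."""
    return now > assignment.due_by


task = route_flag("acct_1", "enterprise", datetime(2026, 1, 5, 9, 0))
```

The fallback owner matters more than it looks: an unmapped segment is exactly where "we'll figure it out" creeps back in.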

The escalation matrix

Not every flagged account warrants the same intervention. The matrix that works in practice has three tiers. Tier 1 (lower-risk, smaller-account, automated email): onboarding nudge, feature tip, satisfaction check. Tier 2 (mid-risk, mid-tier account): CSM call within 48 hours with a documented playbook for the conversation. Tier 3 (high-risk, strategic account): executive sponsor reaches out within 24 hours with a business review framing. Each tier has a different cost structure and a different expected save rate. Mapping risk score plus account value to the right tier is half the operational work.
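The mapping from risk score plus account value to a tier can be sketched as a small lookup. The cutoffs below are hypothetical, and the rule for off-diagonal cases (a high-risk but small account, for example) is an assumption: this version takes the lower of the two bands so expensive interventions are reserved for accounts that are both risky and valuable.

```python
# Hypothetical cutoffs for illustration; calibrate against your own base.
RISK_CUTOFFS = (0.5, 0.7)             # mid-risk, high-risk
VALUE_CUTOFFS = (20_000.0, 100_000.0)  # mid-tier, strategic (annual value)

# Interventions and response windows as described in the three tiers.
PLAYBOOK = {
    1: {"action": "automated email", "sla_hours": None},
    2: {"action": "CSM call with documented playbook", "sla_hours": 48},
    3: {"action": "executive sponsor outreach", "sla_hours": 24},
}


def band(value: float, cutoffs: tuple) -> int:
    """Map a number to band 1, 2, or 3 using (mid, high) cutoffs."""
    mid, high = cutoffs
    if value >= high:
        return 3
    if value >= mid:
        return 2
    return 1


def escalation_tier(risk: float, annual_value: float) -> int:
    """Tier is the lower of the risk band and the value band (an assumption)."""
    return min(band(risk, RISK_CUTOFFS), band(annual_value, VALUE_CUTOFFS))


tier = escalation_tier(risk=0.85, annual_value=250_000)
plan = PLAYBOOK[tier]
```

Other combination rules are defensible, such as letting very high risk alone promote an account one tier. Whichever rule you pick, write it down before launch so tier assignment is not decided flag by flag.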

The save-rate measurement everyone gets wrong

Measuring "did we save the account?" is harder than it looks. The temptation is to compare flagged-and-saved accounts to flagged-and-churned accounts and claim a save rate. That comparison ignores the counterfactual: those accounts might have stayed anyway. The honest measurement requires holdout cohorts. A small percentage of flagged accounts get no intervention (the control group) and you compare retention rates between treated and untreated cohorts. Most teams skip the holdout because it feels uncomfortable to deliberately not act on flagged accounts. Without it, you can't tell whether your interventions are working or whether you're just intervening on accounts that would have stayed anyway.
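The holdout comparison is simple arithmetic once the groups exist. A minimal sketch, assuming flagged accounts are randomly assigned before any intervention happens. The 10% holdout fraction, the seed, and the retention counts are illustrative numbers, not benchmarks.

```python
import random


def split_holdout(flagged_ids: list, holdout_fraction: float = 0.1, seed: int = 7):
    """Randomly set aside a control group that receives no intervention."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    ordered = sorted(flagged_ids)
    k = round(len(ordered) * holdout_fraction)
    control = set(rng.sample(ordered, k))
    treated = [a for a in ordered if a not in control]
    return treated, sorted(control)


def incremental_retention(treated_retained: int, treated_total: int,
                          control_retained: int, control_total: int) -> float:
    """Retention rate of treated accounts minus retention rate of controls."""
    return treated_retained / treated_total - control_retained / control_total


flagged = [f"acct_{i:03d}" for i in range(100)]
treated, control = split_holdout(flagged)

# Illustrative outcome: 60% of treated accounts stayed, 45% of controls stayed.
uplift = incremental_retention(540, 900, 45, 100)
```

In this illustration the naive reading is a 60% save rate, but 45% of untreated accounts stayed anyway, so the interventions are responsible for about 15 percentage points. That smaller number is the one to use when deciding whether the program pays for itself.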

These three operational questions (ownership, escalation, measurement) determine whether the prediction infrastructure produces revenue or just dashboards. Build them before the model goes into production, not after.

Build vs buy: honest tradeoffs for 2026

The build-vs-buy question for churn prediction in 2026 has a sharper answer than it did three years ago. The vendor ecosystem matured. Build only when three conditions are all true.

Build wins when you have: a mature in-house data team with at least one MLOps engineer; 50,000+ active accounts (below this scale the marginal accuracy gain from custom modeling doesn't justify the engineering cost); and a custom event taxonomy that off-the-shelf vendors can't ingest cleanly without months of integration work. Most enterprises with all three conditions still benefit from buying a vendor for the action-layer machinery (workflow routing, CSM-facing UI, integration with CRM and ticketing) and building only the model itself.

Buy wins in almost every other case. The customer churn prediction software vendors worth shortlisting in 2026 include ChurnZero, Gainsight, Pecan, Vitally, Akkio, and Retently. Each is strong in a different segment. Gainsight for enterprise CS-led motions. ChurnZero for mid-market product-led. Pecan for accounts where the data team wants to plug into an existing data warehouse. Vitally for product-led with heavy in-app intervention. Akkio for teams that need a no-code modeling layer. Retently for NPS-anchored prediction. The right choice depends on existing stack and operational style.

The honest economics are worth keeping in mind too. The often-cited "5x more expensive to acquire than retain" rule traces back to Frederick Reichheld's 1990 Harvard Business Review article on customer defections in services businesses, and modern analyses generally land in the 5-10x range for B2B SaaS. That math justifies almost any reasonable investment in this category.

The honest version of the build path: most teams who build end up rebuilding the model twice and reaching production three years late. The first version overfits, the second underperforms vendor benchmarks, and the third is functional but costs two engineers' salaries for what a $40K annual vendor contract would have delivered in month two. Build only when the strategic case is overwhelming.

The org design question: where churn prediction should live

The most common org design for churn prediction is wrong: putting it inside Customer Success. CS is reactive by design. They own the customer relationship, they respond to issues, they run QBRs and renewal conversations. That's a single-account cadence. Churn prediction is proactive and operates at portfolio cadence, scoring the entire base on a daily or weekly cycle and surfacing actionable subsets. The two muscles are different.

The org design that works in practice splits the function. RevOps or Data Science owns the model, the pipeline, and the score. They handle data engineering, retraining, performance monitoring, and threshold calibration. CSMs are consumers of the score, not owners. Their job is to act on flagged accounts using the playbook the model triggers, measure save outcomes, and feed those outcomes back as labels for the next training cycle.

This split mirrors how mature analytics functions are structured in adjacent domains. Marketing teams don't own the attribution model; analytics owns the model and marketing consumes the output. Same logic applies here. The teams that put churn prediction inside CS end up with a model that nobody fully owns and a CS team that resents the "extra work" of acting on alerts they didn't ask for. The teams that split the function get sustained execution and a feedback loop that improves the model over time.

This kind of operational redesign is the work covered in our CX strategy advisory, where churn prediction is one of the workstreams the operating model needs to absorb cleanly.

Three things I'd do differently if starting today

Looking back at every churn prediction implementation pattern I've seen succeed or fail, three principles separate the working programs from the abandoned ones.

Skip the perfect model. Ship a 70%-accurate model with a clear action playbook on Day 1. The temptation is to delay launch until the model is "ready." The model is never ready. Ship the v1 model with the v1 action playbook, measure outcomes for 90 days, and iterate on both in parallel. The teams that wait for model accuracy before building action infrastructure end up with a beautifully accurate model that nobody acts on.

Instrument the action side before the prediction side. Build the routing logic, the CSM playbook, the escalation matrix, and the holdout-based save-rate measurement first. Then point the model at the action layer when the action layer is ready to consume scores. Reverse the order and you ship a dashboard. The action layer is the bottleneck; the model is the easy part.

Treat involuntary churn as a separate workstream, not part of the prediction pipeline. Failed payments, expired cards, declined renewals: this category accounts for 20 to 40% of total churn in subscription businesses (per Paddle's research on involuntary churn) and it's almost entirely fixable with smart payment retries, automated card-update flows, and a clean dunning sequence. Stripe's published data on Smart Retries shows recovery rates between 50 and 70% for this category. None of that requires a prediction model. Run the involuntary-churn fix first, then build prediction for the voluntary-churn population that remains.

The pattern across all three: the prediction model is the visible piece, but the operational infrastructure around it is what determines whether the program produces revenue. Build the infrastructure with the same rigor you'd build the model itself, and the program works. Skip the infrastructure work and the model becomes another expensive dashboard nobody reads.

For the broader retention strategy frame that this prediction work plugs into, our customer loyalty psychology and strategies post covers the upstream investment thesis, and the subscription retention playbook maps the operational workstreams that depend on the prediction signal. For teams that want to benchmark where their CX operating model sits today, the CX Maturity Assessment covers the prediction-and-action capability dimension explicitly.

The single sentence to take away: in 2026, churn prediction is solved at the model layer and unsolved at the action layer. Invest accordingly.