Conversational AI for customer service is software that holds natural-language conversations with customers across chat, email, voice, and messaging to resolve their requests without a human agent in the loop. In 2026, three model families dominate: RAG-powered chatbots that answer from your knowledge base, agentic AI that executes multi-step actions across systems, and voice AI that handles phone calls end-to-end.

Every article ranking on this query is from a vendor selling something. IBM, Salesforce, Ada, Vonage, Intercom, Sierra, Decagon, Dialpad, AWS, Soprano. Ten of ten in the top 10 are platform pitches dressed as explainers. The framing they share is the 2022 one: deploy conversational AI to deflect support volume. In 2026 the question isn't deflection rate. It's which of the three model families you're actually buying, and where each one fails.

This guide is from a vendor-neutral CX advisory that doesn't sell software. It covers the three model families, what the technology has actually delivered against the Gartner forecasts of 2022, the use cases that work, the intent classes where conversational AI shouldn't live, and the eight-step deployment playbook the keyword promises but the vendor explainers skip. The strategic context for broader AI-in-CX implementations sits in our adjacent pillar post; this one picks up where that one ends, on the customer-facing side.

What conversational AI for customer service actually is

The shortest honest definition: conversational AI is the family of language-model-powered systems that hold open-ended conversations with customers and either answer their questions or take action on their behalf. Three architectural choices distinguish modern systems from the rule-based chatbots of 2018-2022: large language models replace decision trees, retrieval-augmented generation grounds answers in a current knowledge base instead of a frozen training corpus, and agentic frameworks let the model call tools and execute multi-step workflows across your stack.

Two distinctions matter from the start. First, conversational AI is the customer-facing side. The agent-side equivalent is co-pilot software, which sits beside a human agent and drafts replies or surfaces context. They share the same underlying models. They are not the same product, and the deployment economics diverge sharply. We covered the agent-assist tools that sit beside the human in a separate post; this one is about the customer-facing side only.

Second, the model family matters more than the vendor brand. A RAG-chatbot from Intercom and a RAG-chatbot from Ada will perform within a few percentage points of each other on the same intent set. An agentic AI from Decagon and a RAG-chatbot from IBM will differ by 30 to 50 points of containment on the same intent set. The category split inside conversational AI is bigger than the vendor split inside any one category. Most buyers get this backwards, which is how you end up paying for a Ferrari to run errands across the street.

Conversational AI vs. chatbot

The "is conversational AI just a chatbot?" question gets asked at every vendor demo. The honest answer: a traditional rule-based chatbot is a scripted decision tree, and conversational AI is the broader category that uses large language models and retrieval to handle open-ended language. Inside conversational AI, the meaningful distinction in 2026 is between RAG-chatbots, agentic AI, and voice AI — three families with different deployment economics, not three labels for the same thing. Calling all of it "a chatbot" is the framing that locks teams into 2022 deflection thinking instead of 2026 end-to-end resolution.

The three model families: RAG-chatbot, agentic AI, voice AI

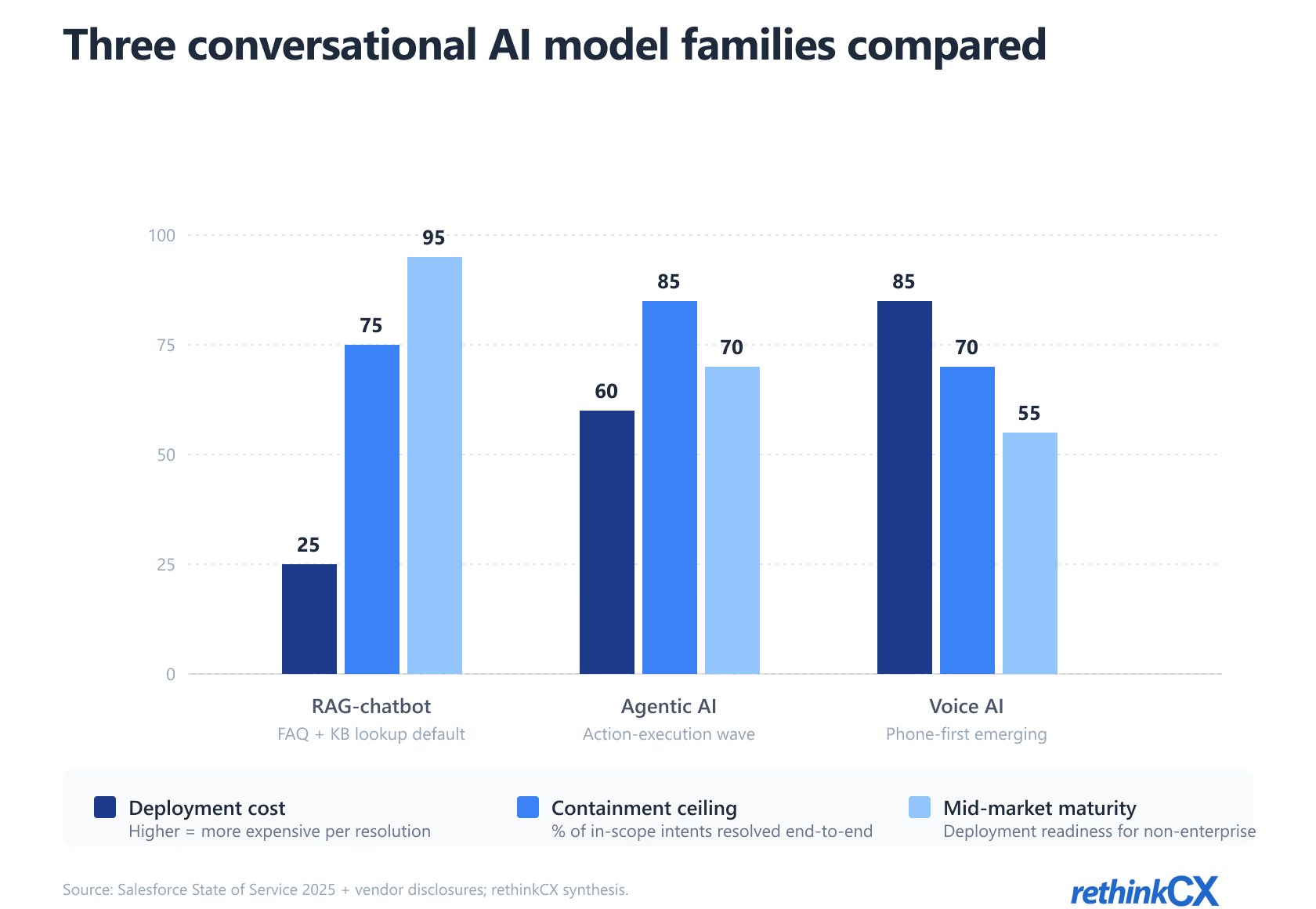

Most "what is conversational AI" content treats the category as one thing. It isn't. There are three model families operating in 2026, with different deployment costs, different escalation patterns, and different failure modes. Picking the wrong family is the single most common deployment mistake we see.

| Family | Typical use cases | Deployment cost band | Escalation behavior | Example vendors |
|---|---|---|---|---|
| RAG-chatbot | FAQ deflection, knowledge-base Q&A, policy lookups | $-$$ | Hands off when off-topic or confidence-low | Intercom Fin, Ada, IBM Watson Assistant |
| Agentic AI | Multi-step actions across systems (refunds, cancellations, address changes) | $$-$$$ | Executes; escalates only on policy or auth boundaries | Decagon, Sierra, Salesforce Agentforce |
| Voice AI | Phone-first inbound and outbound conversational handling | $$$-$$$$ | Hands off mid-call to human agent on signal | Sierra Voice, Vapi, OpenAI Realtime API |

When RAG-chatbot is the right choice

RAG-chatbots win when three conditions hold: high volume in a narrow set of informational intents, a knowledge base that is genuinely current, and clear escalation rules for everything outside the retrieval scope. The classic fit is post-purchase support for ecommerce, policy and FAQ lookup for SaaS, and benefits questions for HR. The economics are mature. The failure mode is well-understood: stale knowledge base in, confidently wrong answer out.

When agentic AI earns its keep

Agentic AI earns its keep when the customer's request requires action, not just an answer. Refunds, cancellations, address changes, plan upgrades, appointment rescheduling. The mid-market wave that started in 2024 with Decagon and Sierra is now real enough to deploy, and the per-resolution economics outrun RAG-chatbots on transactional intents because each successful resolution closes a ticket completely instead of deflecting it to a human.

When voice AI is ready and when it isn't

Voice AI crossed a quality threshold in 2025. The OpenAI Realtime API and the Sierra Voice product line both produce conversational voice that customers cannot reliably distinguish from human agents in blind tests inside controlled domains. What hasn't matured is deployment infrastructure: integration with existing telephony, mid-call escalation that doesn't drop context, and observability into voice transcripts at scale. The capability is ready. The operational bar is one product cycle behind.

What the technology has actually delivered

Gartner's August 2022 forecast was that conversational AI deployments in contact centers would cut $80 billion in agent labor costs by 2026, with one in ten agent interactions automated by 2026 against 1.6% in 2022. The number gets quoted in most articles ranking for this keyword. None of them revisit it, even though we are now in the year the forecast was made for.

The verdict is mixed. On labor displacement, Gartner was directionally right. Per the U.S. Bureau of Labor Statistics' 2024 OEWS estimates, employment of customer-service representatives declined modestly from its 2022 peak, with the steepest declines in the industries (retail, telecom, ecommerce) where conversational AI deployments concentrated first. On the timeline, Gartner was early. The $80 billion figure assumed the agentic-AI wave would reach mid-market scale by 2024. It didn't. Decagon's first major mid-market deployment cohort dates to mid-2024. Sierra's enterprise-scale rollouts hit production in 2025. McKinsey's 2025 State of AI survey puts the empirical reality more starkly: 23% of organizations are scaling agentic AI in at least one business function, but in any given function, no more than 10% are at scale. The economic effect is real and growing, but the wave compressed into 2025-2026 rather than spreading across the four-year window the original forecast assumed.

The 2026 reality is closer to the Salesforce State of Service findings, where high-performing service organizations report meaningfully higher AI containment than the broader population, but the population median sits well under what the 2022 forecast implied. The Zendesk 2026 CX Trends Report tracks a similar gap between leaders and laggards on AI customer service maturity. Both data sets point at the same conclusion: the original Gartner end-state is now a 2027-2028 outcome, not a 2026 one.

The conventional take is that conversational AI's job is to deflect. The actual job in 2026 is end-to-end resolution. The failure mode is no longer "the bot didn't understand," it's "the bot understood and acted on the wrong thing." That's a different deployment problem with a different fix.

Five use cases that work

The use-case lists in vendor explainers are interchangeable. Five categories actually carry the operational weight in 2026.

1. After-purchase status and tracking. RAG-chatbot territory. The customer asks where their order is, the bot reads the order from the commerce platform, queries the carrier, and replies with the tracking detail. This is the highest-containment intent class in conversational AI deployments today; ecommerce brands we've watched roll this out routinely sit in the 60 to 80% containment range once the order-system integration is clean.

2. Refunds, cancellations, and address changes. Agentic AI territory. The 2025-2026 wave. The agent reads the request, validates eligibility against policy, executes the action in the underlying system, and confirms back to the customer. This is the use case that justifies the deployment cost premium for agentic over RAG. The closest precedent in our content is agentic AI in the churn-prevention action layer, where the action-execution tier carries the same architectural pattern.

3. Knowledge-base lookup and policy questions. RAG-chatbot territory, narrower scope. Benefits questions, return policy, warranty terms. The bar is whether the knowledge base is current. If the KB is stale, a human agent reading the same KB will fail too. The bot just fails faster and at higher volume.

4. Inbound voice triage during volume spikes. Voice AI territory, narrow window. Holiday season, product launches, outage events. Voice AI handles the first 30 to 60 seconds of intake, captures intent, and either resolves a top-tier intent or routes the call to the right human queue with full context. The 2025 holiday cycle was the first one with voice AI deployed at material scale on the inbound side; the operators we advised reported meaningful reductions in hold-time-driven abandonment without the staffing premium of seasonal ramp.

5. Cross-channel context handoff. Foundational across all three families. The customer starts a chat, switches to email, calls the next day. Conversational AI maintains the case context across channels so the human agent who eventually picks up the escalation doesn't ask the customer to re-explain. This is the use case that pays for itself even when bot containment is mediocre, because it prevents the single most common CSAT-cratering experience: the customer repeating the problem for the fourth time.

Where conversational AI shouldn't live

We've watched mid-market ops teams deploy a RAG-chatbot to 80% of inbound chat in week one, see CSAT crater 18 points in 30 days, and scale the bot back to 40% by month two. The pattern recurs across the advisory engagements we've worked. The deployment wasn't bad. The scope was. Five intent classes belong outside the bot scope from day one, not after a CSAT incident teaches the team where the boundaries are.

Regulated-industry intents with PII restrictions. Medical history changes, financial product enrollment, anything where a misstep triggers a compliance event. The cost of a single regulated-intent error usually outruns a year of containment savings. The pattern we see across the regulated-vertical engagements that actually work: scope the bot to read-only retrieval (policy lookups, eligibility questions, document pointers) and route every transactional intent to human escalation. The bots that try to do more in regulated environments end up in a compliance review within the first two quarters.

Ambiguous escalation tickets. The kind where the agent has to read between the lines. "I just got off the phone with your billing team and I'm not happy" is not a routable intent. Bots route it to billing. The customer needs an empathetic human who recognizes the meta-signal.

Low-volume rare-intent tickets. If an intent appears 20 times a quarter, the training-data ROI is negative. The cost to label, train, and monitor the intent exceeds the labor savings from automation. Send these to humans and stop pretending it's a coverage gap worth closing.

Account-recovery flows. Adversarial users probe bots for social-engineering vectors. The bot's job in account recovery is to fail closed and escalate. Anything else is a security incident waiting to be written up.

High-emotion service-recovery interactions. Empathetic deflection is worse than a 30-second hold time. A customer who has just experienced a service failure does not want a bot's "I understand your frustration." They want a human, fast. The bot should recognize the emotional signal and escalate immediately.

The deployment sequence: an 8-step playbook

The vendor explainers skip this section. The keyword promises an implementation guide; the SERP delivers a feature pitch. The deployment sequence below is what we run with mid-market CX operations adopting conversational AI for the first time. It's drawn from the engagements we've worked across the rethinkCX advisory practice. Every step earns its place because skipping it has a predictable downstream cost that we've watched teams pay.

1. Categorize 90 days of tickets into an intent taxonomy. Before picking a model or a vendor, sample 90 days of inbound tickets and tag every one into a flat intent taxonomy of 25 to 60 intents. The taxonomy answers two questions the vendor pitch will not: which intents have the volume to justify automation, and which sit in the failure-mode list above. Most teams skip this step and pay for it 90 days later when the model hallucinates across intent boundaries. The intent-classification techniques here overlap with the ML approaches behind intent classification, which covers the embedding architecture in more depth.

2. Prepare the data layer. Audit the knowledge base for stale articles, conflicting answers, and policy gaps. Score the historical ticket text for quality and language coverage. Most KBs we audit have a 15 to 30% staleness rate, and the customer-facing bot will surface that stale content confidently and incorrectly until someone fixes it. The upstream signal capture overlaps with what mature voice-of-customer programs already produce.

3. Define escalation rules before the bot scope. Decide what triggers a handoff to a human before deciding what the bot handles. Triggers we use: customer sentiment, the phrase "talk to a person," repeated questions in one session, any intent on the failure-mode list. Defining the exit door first forces the bot scope to be the set of intents the bot actually closes, not the set the vendor demo handled.

4. Instrument CSAT measurement by intent class. Measure pre-deployment CSAT by intent class. Post-deployment, track CSAT, containment, and resolution rate per intent class, not as a blended average. The blended average will look fine while a single high-emotion intent class craters the experience for the segment that complains loudest.

5. Gate pilot-to-production on three written numbers. Containment rate, CSAT floor against the human baseline, resolution rate per intent. Publish the gate before the pilot starts. Most failed deployments fail because the gate was negotiated downward after the pilot underperformed, not because the technology was wrong.

6. Set a quarterly retraining cadence with intent-drift monitoring. The signal to act on is not model accuracy decay but new intents appearing in escalations that were not in the original taxonomy. That's the early warning that the taxonomy itself needs an update.

7. Select a vendor against five fixed questions. Which model family are you, what's the integration depth into our existing stack, what's the per-resolution price band at our volume, what's the retraining model, and what's the disengagement cost if we leave. By the time you're talking to vendors, the intent taxonomy and the gate thresholds are already in hand. Anything else the vendor wants to discuss is marketing.

8. Run the 90-day audit when the SOW says the rollout is complete. Audit against the gate thresholds intent by intent. Two patterns are common: the bot is exceeding gate on routine intents and underperforming on a small set where the taxonomy missed an edge case, or the bot is meeting blended targets while one segment of customers is quietly degrading.
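The escalation triggers in step three can be written down as code before any bot scope is agreed. What follows is a minimal sketch, not a production rule engine: the `Turn` record, the intent labels, the phrase list, the sentiment scale, and both thresholds are hypothetical placeholders that a team would replace with its own taxonomy and tooling.

```python
from dataclasses import dataclass

# Hypothetical labels standing in for the five out-of-scope intent classes.
FAILURE_MODE_INTENTS = {
    "regulated_pii",
    "ambiguous_escalation",
    "rare_low_volume",
    "account_recovery",
    "service_recovery",
}

# Assumed phrasings; a real deployment would maintain its own list.
HUMAN_REQUEST_PHRASES = ("talk to a person", "speak to a human", "real agent")


@dataclass
class Turn:
    text: str         # customer message
    intent: str       # label from the intent taxonomy
    sentiment: float  # assumed scale: -1.0 (negative) to 1.0 (positive)


def escalation_reason(turns, sentiment_floor=-0.5, max_repeats=2):
    """Return the first handoff trigger that fires, or None to stay with the bot."""
    if not turns:
        return None
    latest = turns[-1]
    if latest.intent in FAILURE_MODE_INTENTS:
        return "failure_mode_intent"
    lowered = latest.text.lower()
    if any(phrase in lowered for phrase in HUMAN_REQUEST_PHRASES):
        return "asked_for_human"
    if latest.sentiment < sentiment_floor:
        return "negative_sentiment"
    # Same intent raised more than max_repeats times in one session.
    repeats = sum(1 for t in turns if t.intent == latest.intent)
    if repeats > max_repeats:
        return "repeated_question"
    return None


session = [
    Turn("Where is my order?", "order_status", 0.1),
    Turn("I need to talk to a person", "order_status", -0.2),
]
print(escalation_reason(session))  # asked_for_human
```

The ordering is a design choice: the failure-mode check runs first so that an out-of-scope intent escalates even when the customer sounds calm and never asks for a human.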

If we were starting today, we'd spend the first two weeks of any engagement on step one and the next two on step three. Vendor selection comes seventh on this list for a reason.
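Steps four, five, and eight all depend on reading the numbers per intent class rather than as a blend. The sketch below shows one way that check could look. The gate thresholds and the pilot figures are made up for illustration, and expressing the CSAT floor as a maximum drop against the human baseline is our assumption about how a team might write it down.

```python
from dataclasses import dataclass


@dataclass
class IntentStats:
    intent: str
    conversations: int
    containment: float    # share of conversations closed without a human
    csat: float           # post-deployment CSAT, 0-100
    baseline_csat: float  # pre-deployment human CSAT, 0-100
    resolution: float     # share of conversations actually resolved


# Hypothetical gate, written down before the pilot starts.
GATE = {"containment": 0.50, "csat_drop_max": 5.0, "resolution": 0.70}


def failed_gates(s, gate=GATE):
    """List which of the three gate numbers this intent class misses."""
    failures = []
    if s.containment < gate["containment"]:
        failures.append("containment")
    if s.baseline_csat - s.csat > gate["csat_drop_max"]:
        failures.append("csat")
    if s.resolution < gate["resolution"]:
        failures.append("resolution")
    return failures


def blended_csat(stats):
    """Volume-weighted CSAT across all intent classes."""
    total = sum(s.conversations for s in stats)
    return sum(s.csat * s.conversations for s in stats) / total


# Made-up pilot numbers, for illustration only.
pilot = [
    IntentStats("order_status", 9000, 0.72, 86.0, 85.0, 0.90),
    IntentStats("service_recovery", 1000, 0.55, 60.0, 82.0, 0.75),
]

report = {s.intent: failed_gates(s) for s in pilot}
print(round(blended_csat(pilot), 1))  # 83.4
print(report)  # {'order_status': [], 'service_recovery': ['csat']}
```

With these illustrative inputs the blended CSAT looks healthy at 83.4 while the smaller intent class sits 22 points under its human baseline, which is the pattern the per-intent audit exists to catch.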

How to choose a vendor (without listing vendors)

This section is what every other article on this query is. We're not going to do a vendor listicle. The platforms we actually shortlist live in a separate post that gets refreshed annually. What belongs here is the criteria framework that makes the shortlist relevant to your specific deployment.

The five questions in step seven above are the baseline. Three of them deserve expansion.

Pricing reality versus list price. Per-resolution pricing in 2026 ranges roughly from $0.30 for high-volume RAG-chatbot resolutions to $4-$8 for agentic AI resolutions on transactional intents. Voice AI per-minute pricing sits in a separate band. List price is rarely the price you pay. Mid-market teams routinely negotiate 30 to 50% off list when contract value clears six figures, especially with the agentic-AI vendors who are still in the customer-acquisition phase of their growth curve.
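The gap between list price and paid price is easier to argue about with the arithmetic in front of you. This is a rough sketch using the per-resolution bands quoted above. The monthly volume is a hypothetical input, and treating the six-figure mark as a hard cutoff on annual list value is a simplifying assumption, not how any particular vendor prices.

```python
SIX_FIGURES = 100_000  # assumed cutoff on annual list value, in dollars


def annual_cost(monthly_resolutions, list_price, discount):
    """Annual spend; the discount applies only once list value clears six figures."""
    list_value = monthly_resolutions * 12 * list_price
    if list_value >= SIX_FIGURES:
        return list_value * (1.0 - discount)
    return list_value


VOLUME = 10_000  # hypothetical resolutions per month

# Per-resolution list prices taken from the bands quoted in the text.
scenarios = {
    "RAG-chatbot": 0.30,
    "Agentic AI (low)": 4.00,
    "Agentic AI (high)": 8.00,
}

for name, price in scenarios.items():
    at_list = annual_cost(VOLUME, price, 0.0)
    at_30 = annual_cost(VOLUME, price, 0.30)
    at_50 = annual_cost(VOLUME, price, 0.50)
    print(f"{name}: list ${at_list:,.0f}, 30% off ${at_30:,.0f}, 50% off ${at_50:,.0f}")
```

At this assumed volume the RAG-chatbot contract never reaches six figures, so under the stated assumption it gets no discount, while the agentic contracts do.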

Integration depth. The question that gets asked too late. A vendor demo runs against a clean sandbox. Production runs against your tangled stack of CRM, ticketing, commerce, billing, and identity systems. Ask for two named customer references in the same vertical with the same systems combination as yours. If the vendor can't produce them, the integration risk is yours to underwrite.

Disengagement cost. Most contracts include data-portability clauses that read fine on signing and turn out unworkable in execution. The historical conversation logs, the trained intent classifier, the case object structure — the question is whether you can leave with all three or whether you're rebuilding from scratch on the next vendor. We've seen teams stay with a worse-fit vendor for two years because the disengagement cost was higher than the switching benefit.

If your team is mid-engagement on any of this and wants a second pair of eyes, rethinkCX's CX technology advisory sits in the vendor-neutral seat by design.

The 2026-2027 trajectory

The next 12 to 18 months are about the failure-mode list shrinking, not the use-case list expanding. Voice AI deployment infrastructure will catch up to voice quality. Regulated-industry deployments will get production-ready agentic frameworks with the audit trails compliance teams need. Multimodal handoffs (chat to voice, voice to video, all carrying case context) will become standard rather than premium-tier. The model families themselves are stable. The deployment surface around them is what's still maturing.

The teams that will lead in 2027 are the ones who deploy conservatively in 2026 — narrow scope, hard-gated CSAT thresholds, intent taxonomies that get updated quarterly. The teams that will be writing post-mortems are the ones who deploy 80% of inbound to a bot in week one because the vendor demo looked good. For teams that want a structured way to benchmark where their CX operating model sits on the maturity curve, the CX Maturity Assessment covers the AI-deployment capability dimension explicitly.